RAG (Retrieval-Augmented Generation)

RAG (Retrieval-Augmented Generation) is the way to integrate knowledge outside your model’s training data into your LLM. A prime candidate for this is “Private Documents”.

RAG makes it possible for an LLM to answer a question like: Is my LG TV's warranty still valid for intermittent screen flickering issues? Without knowledge of your warranty documents, the LLM has to ask you follow-up questions like: When did you buy it? With RAG, the LLM will find the warranty, read the T&C and purchase date in the relevant documents, reason with that date, and maybe make a web_search tool call to review other people’s experience when the warranty is still valid but it is unclear whether the screen flickering issue is covered.

Since I love private and local AI, RAG is a big deal for me; it is also the bridge between generic LLM knowledge and an LLM that knows you and can integrate that knowledge into its reasoning.

Flow

User Query

↓

+---------+

| LLM | (decides it needs info)

+---------+

↓

+---------+

| RAG | (retrieve relevant data)

+---------+

↓

Retrieved Context

↓

+---------------------------+

| Context + Original Query |

+---------------------------+

↓

+---------+

| LLM | (generates answer)

+---------+

↓

Final Response

Inside the RAG

A simple RAG would work something like:

Query → Embed → Search → Context

Complete Flow:

User → LLM → RAG (Query → Embed → Search Results) → Context → LLM → Answer

Calling RAGs

There is no magic here. Just like with many other things, LLMs have one real interface to do things, which is tool calling. That’s exactly how RAGs and LLMs work together. The LLM calls a tool, which is an API for the RAG. It can be MCP, it can be simply a CLI, or it can be a function within the agent process (aka function calling); these are all the same concept.

Calling a RAG and calling an MCP’s web_search tool would be exactly the same pattern from the LLM’s point of view.
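To make the "it's all tool calling" point concrete, here is a minimal sketch of the dispatch side in plain Python. The call format ({"name": ..., "arguments": ...}), the registry, and the stub tool bodies are assumptions made up for illustration; they are not any particular framework's API.

```python
import json
from typing import Callable

# Hypothetical stub tools. A real setup would query your RAG index
# and a real search API here.
def document_search(query: str, top_k: int = 5) -> list[str]:
    return [f"doc chunk about {query!r}"][:top_k]

def web_search(query: str) -> list[str]:
    return [f"web result for {query!r}"]

# The whole "interface" is a name mapped to a callable.
TOOLS: dict[str, Callable[..., list[str]]] = {
    "document_search": document_search,
    "web_search": web_search,
}

def dispatch(tool_call_json: str) -> list[str]:
    """Run a tool call the LLM emitted as JSON and return its result."""
    call = json.loads(tool_call_json)
    tool = TOOLS[call["name"]]
    return tool(**call["arguments"])

# From the LLM's side, both calls follow exactly the same pattern.
print(dispatch('{"name": "document_search", "arguments": {"query": "LG TV warranty", "top_k": 3}}'))
print(dispatch('{"name": "web_search", "arguments": {"query": "LG TV screen flickering"}}'))
```

Whether the tool lives behind MCP, a CLI, or an in-process function only changes what sits behind the registry entry.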

There is also a pretty cool tool called MCP Inspector, which simplifies debugging and testing your own MCPs. Run it with: npx @modelcontextprotocol/inspector

Embeddings & Vector Databases

Theoretically your RAG can be just a simple keyword search on an SQLite database, but that defeats the value. What you want is semantic search within your documents, and this is where embeddings come into play.

Embeddings encode meaning, so they let you find results with a similar meaning rather than only results that share the same keywords.

Before running the search query coming from an LLM, the RAG creates an embedding of that query and runs it against a vector database. Vector databases are exactly what you think: they store embeddings and find the vectors nearest to the query embedding using various algorithms in their index (which is also a bunch of embeddings, created during the indexing phase).
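Here is a rough sketch of what "find the nearest vectors" means, using brute-force cosine similarity over a tiny in-memory index. The 3-dimensional vectors and file names are made up; real embeddings have hundreds or thousands of dimensions, and real vector databases use approximate indexes (HNSW is a common one) instead of comparing against every vector.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def nearest(query_vec: list[float], index: dict[str, list[float]], top_k: int = 2):
    """Score every stored vector against the query and keep the best top_k."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in scored[:top_k]]

# Made-up "embeddings" created during a pretend indexing phase.
index = {
    "warranty.pdf": [0.9, 0.1, 0.0],
    "recipe.txt": [0.0, 0.2, 0.9],
    "tv-manual.pdf": [0.7, 0.6, 0.1],
}

# Pretend this is the embedding of the LLM's search query.
query_vec = [1.0, 0.2, 0.0]
for doc_id, score in nearest(query_vec, index):
    print(doc_id, round(score, 3))
```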

Theoretically you can put all of the internet index into one big vector database and do semantic search for the online results… yes very very theoretically but wouldn’t it be cool!

Before I explain further, two things to clarify:

- Pinecone, Qdrant, and Chroma DB are popular vector databases to check out

- Embeddings are created with an embedding model, e.g. qwen3-embedding:4b, or something super light like nomic-embed-text with only ~300m parameters!

Search

This is something a vector database takes care of, but these are the high-level algorithm classes. They are important to understand for building and using an effective RAG.

1. Vector Similarity Search

We already covered this: semantic search. I won’t get into the algorithms for this because I don’t really understand them in a way that I can explain.

2. Keyword / Lexical Search

This is the old-school keyword search with fancy algorithms that you would find in any database; the most common lexical algorithm used here is BM25.
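For a feel of what BM25 does, here is a small self-contained sketch of the commonly used Okapi BM25 formula. The whitespace tokenizer, the k1 and b defaults, and the sample documents are assumptions for illustration; a real engine does proper tokenization and keeps an inverted index instead of rescanning every document.

```python
import math
from collections import Counter

def tokenize(text: str) -> list[str]:
    # Naive tokenizer: lowercase and split on whitespace.
    return text.lower().split()

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Return one BM25 relevance score per document (docs must be non-empty)."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs

    # Document frequency: in how many documents does each term appear?
    df: Counter = Counter()
    for tokens in tokenized:
        df.update(set(tokens))

    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            # Rare terms get a higher weight than common ones.
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Term frequency saturates and is normalized by document length.
            denom = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores

docs = [
    "LG TV warranty terms and conditions",
    "chocolate cake recipe",
    "TV screen flickering troubleshooting guide",
]
for doc, score in zip(docs, bm25_scores("tv warranty", docs)):
    print(round(score, 3), doc)
```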

3. Hybrid Search

Because we want the most accuracy out of our searches, production RAGs use both 1 and 2 to deliver the most relevant results.

Your query is run through both a lexical search and a vector search, and both relevancy scores are combined to calculate the best match and return the results in that order. Which brings us to Re-ranking.
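One simple way to combine the two score sets is a weighted sum after min-max normalization, sketched below. This is only one option and an assumption on my part (Reciprocal Rank Fusion is another common choice); the alpha weight, document ids, and scores are invented for the example.

```python
def min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to the 0..1 range so both searches are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (value - lo) / (hi - lo) for doc_id, value in scores.items()}

def hybrid_rank(lexical: dict[str, float], semantic: dict[str, float], alpha: float = 0.5):
    """alpha weights the semantic score, (1 - alpha) the lexical score."""
    lex, sem = min_max(lexical), min_max(semantic)
    combined = {
        doc_id: alpha * sem.get(doc_id, 0.0) + (1 - alpha) * lex.get(doc_id, 0.0)
        for doc_id in set(lex) | set(sem)
    }
    return sorted(combined.items(), key=lambda item: item[1], reverse=True)

# Made-up raw scores: BM25 scores are unbounded, cosine scores are not.
lexical = {"a": 7.2, "b": 1.1, "c": 0.0}
semantic = {"a": 0.62, "b": 0.91, "d": 0.40}

for doc_id, score in hybrid_rank(lexical, semantic):
    print(doc_id, round(score, 3))
```

Normalizing first matters because raw BM25 and cosine scores live on different scales, so adding them directly would let one search dominate.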

Re-Ranking

It’s pretty much standard practice in RAG pipelines to re-rank after getting the results.

Re-ranking takes the search results and scores each one against the query again. Re-ranking is slower than these searches but more accurate. Since we run it on a limited set already filtered from the database, we can afford to be slower and are happy to make the accuracy/speed trade-off.

This is the flow

Query

→ Search (top 50)

→ Re-ranker scores each result

→ Sort by score

→ Keep top 5–10

→ Send to LLM
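The flow above, as a small sketch. The scoring function here is a toy word-overlap measure standing in for a real re-ranking model, which is purely an assumption for illustration; the point is the shape of the step: score each candidate, sort, keep the top few.

```python
from typing import Callable

def overlap_score(query: str, text: str) -> float:
    """Toy stand-in for a re-ranker: fraction of query words found in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0

def rerank(
    query: str,
    candidates: list[str],
    score_fn: Callable[[str, str], float] = overlap_score,
    keep: int = 5,
) -> list[str]:
    """Score each search result against the query, sort by score, keep the best."""
    scored = [(score_fn(query, candidate), candidate) for candidate in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [candidate for _, candidate in scored[:keep]]

# Pretend these came back from the (larger) first-stage search.
candidates = [
    "tv remote battery replacement",
    "warranty covers screen flickering for 24 months",
    "screen cleaning tips",
]
print(rerank("screen flickering warranty", candidates, keep=2))
```

Swapping in a real model only means replacing score_fn; the rest of the step stays the same.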

Again, keep in mind all of this can be however you want; effectively this is a simple interface between your LLM and RAG. It can be as dumb as you want it to be, or as complicated and specialized for you as you want it to be.

The way you put these things together is referred to as your RAG Pipeline.

I haven’t spent time on re-ranking yet in my RAG, so I won’t cover much about it at the moment. The idea is explained, and here are 2 articles on my read list to implement and play around with. Important keywords for further research are ColBERT and Late Interactions.

BTW, Fuck Medium and any platform that requires you to log in to read random blog posts, and shout out to anyone who uses Medium, get the fuck out of that stupid fucking blogging platform.

RAG Pipeline

We’ve covered how we interface with a RAG, but we haven’t covered how we build the bloody thing in the first place to search within it!

A complete RAG Pipeline would be something like this:

Ingest Data → Query Data → Re-rank

We’ve talked about Querying and Re-ranking; let’s talk about building the index.

Ingestion of Data

You can put any document into your RAG. The most common examples are just a bunch of documents (PDFs, Word documents, API documentation, notes, images), but you can do other stuff like scraping 5 websites relevant to a niche topic, indexing all of it, and then even fine-tuning your LLM. Suddenly you have an expert-level LLM Agent that specializes in that topic and will arguably be better than any other generic model.

Common Ingestion Flow

1. Read the Source Data (simplest would be file system, or it can be an inbox via IMAP)

2. Normalize (Clean, De-dupe)

3. Chunk it (we’ll cover chunking later; for now, it’s just turning a document into smaller pieces)

4. Create Embeddings (Call an embedding model to create embeddings)

5. Save the results to a vector database

This creates your database. You can imagine there are other things that need to be done in a production system, such as ensuring your index is up to date.
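The five steps can be sketched end to end in a few lines. Everything here is a simplification under stated assumptions: the sources are an in-memory dict instead of a file system, fake_embed is a hash-based placeholder and NOT a real embedding model, and the "vector database" is just a Python list.

```python
import hashlib
import re

def normalize(text: str) -> str:
    # Clean: collapse all whitespace runs into single spaces.
    return re.sub(r"\s+", " ", text).strip()

def fake_embed(text: str) -> list[float]:
    """Placeholder for an embedding model call. Not semantic at all."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [byte / 255 for byte in digest[:4]]

def ingest(sources: dict[str, str], chunk_words: int = 50) -> list[dict]:
    store: list[dict] = []          # stand-in for the vector database
    seen_hashes: set[str] = set()
    for path, raw in sources.items():                      # 1. read source data
        text = normalize(raw)                              # 2. normalize
        doc_hash = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if doc_hash in seen_hashes:                        # 2. de-dupe
            continue
        seen_hashes.add(doc_hash)
        words = text.split()
        for start in range(0, len(words), chunk_words):    # 3. chunk
            chunk = " ".join(words[start:start + chunk_words])
            store.append({                                 # 5. save
                "vector": fake_embed(chunk),               # 4. embed
                "payload": {"source": path, "text": chunk},
            })
    return store

sources = {
    "a.txt": "Warranty   lasts 24 months.\n",
    "copy-of-a.txt": "Warranty lasts 24 months.",
    "b.txt": "Chocolate cake recipe.",
}
points = ingest(sources)
print([p["payload"]["source"] for p in points])
```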

Let’s quickly double-click on Step 5, saving the results to the vector DB: you need to set up your collection with the search algorithms you want, create it, and then insert the data into the DB.

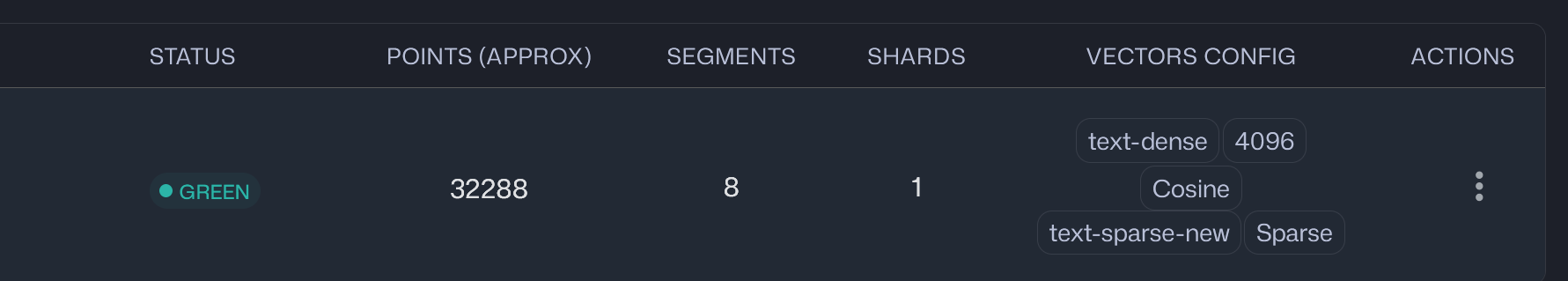

You’ll see something like this in your database (screenshot from Qdrant dashboard):

Points (think of it like a row in relational DBs). Vector Config shows which algorithms the collection (think of it like a table in relational DBs) is optimized and indexed for. If you want to add a new way to search, you generally have to rebuild the collection for it.

For the sake of being familiar with the terminology: sparse is lexical / keyword search and dense is semantic search. While sparse is lexical, it’s still not the exact keyword matching that we use in an SQL query; it uses algorithms like BM25. While it doesn’t rely on dense embeddings, it’s still a relevancy search that uses tokenization.

Chunking

Chunking is splitting documents into smaller chunks.

More importantly, why do we chunk?

- Documents can be fucking big; we don’t want to store a 50MB PDF as one single embedding

- Retrieving only the relevant information (because [[AI-Best-Practice#Memory & Context|Context is everything]])

This means one document might create multiple chunks, and you can change the chunk size. This also means when you query, you can get multiple chunks from one document; you have flexibility to approach this however you want.
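A minimal word-based chunker, as a sketch. The overlap between neighbouring chunks is an assumption I added (a common trick so a sentence cut at a boundary still appears whole in one chunk); the sizes are arbitrary, and real pipelines often split on tokens, sentences, or headings instead of raw words.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this chunk already reached the end of the document
    return chunks

# Ten "words" so the boundaries are easy to see.
text = " ".join(str(i) for i in range(10))
for chunk in chunk_words(text, size=4, overlap=1):
    print(chunk)
```

Each chunk then gets its own embedding and its own point, which is why one document can show up several times in a result set.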

Embedding and Model Families

It’s a good practice to pair your main model and embedding model, e.g. Qwen3:30b with qwen3-embedding:4b.

Don’t get confused about how we use the embeddings: the LLM still sends plain keywords, not embeddings, when querying something. The idea is that both models think about the queries and results the same way, so their usage of keywords and words will be similar to each other and less prone to confusion of meaning.

This is a small detail and shouldn’t make much difference in practice, but this is why it’s suggested as best practice.

Metadata

All the extra information you can have about a document, you want to save it as well. This drastically differs based on your ingestion sources.

Examples:

- For file system sources:

  - Folder Name

  - Last Modified Date

Generating Useful Metadata

You can generate metadata while indexing instead of just reading it, such as id and hash, which will help you keep track of things for de-duping and simply referencing files. Your query interface will be working in chunks; generally you’ll want to allow the user access to the raw document as well. This extra metadata that you create and store with the points will help you do those things.

Other, more complex examples are things that you want to process once and then just store the results. A great example is the language of the document; you can determine it easily while indexing with a small model and just store it as metadata. This will be super useful while filtering.

Some examples:

- Summary of the document/chunk

- Document’s Department (i.e. which department this document belongs to)

- Owner

- From Email address (while indexing an email source)

- All the data relevant to ACLs (explained in the next section)

- Recency information

Basically, whatever might be useful for filtering or can be slow to process needs to be done once while indexing.
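As a sketch of generating metadata at index time, the function below builds an id, a content hash, and the file system fields mentioned earlier. The field names and the choice of uuid5 and SHA-256 are assumptions for illustration; any stable id and hash scheme works.

```python
import hashlib
import uuid
from datetime import datetime, timezone
from pathlib import PurePosixPath

def build_metadata(path: str, content: bytes, modified_ts: float) -> dict:
    """Compute per-document metadata once, to be stored next to every chunk."""
    p = PurePosixPath(path)
    return {
        # Same path always yields the same id, handy for referencing the raw file.
        "doc_id": str(uuid.uuid5(uuid.NAMESPACE_URL, path)),
        # Same bytes always yield the same hash, handy for de-duping.
        "content_hash": hashlib.sha256(content).hexdigest(),
        "folder": p.parent.name,
        "file_name": p.name,
        "last_modified": datetime.fromtimestamp(modified_ts, tz=timezone.utc).isoformat(),
    }

meta = build_metadata("/docs/warranties/lg-tv.pdf", b"hello", 0)
for key, value in meta.items():
    print(key, "=", value)
```

Slower fields such as a summary or the detected language would be added in the same place, since they also only need to be computed once.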

ACLs & Permissions

It’s a RAG’s responsibility to enforce access controls. What you don’t want to do is dump sensitive context into the LLM and then hide it from the user. There are various ways and reasons that your LLM will expose that data. If you need some control over data, your only option is to not inject that data into the context.

Enforce permissions at RAG level. If not needed, don’t index; if needed, enforce it at the LLM interface layer. (OAuth solves a lot if you need auth information and is well supported and used by a lot of libraries).
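A minimal sketch of enforcing this inside the RAG, before anything reaches the LLM's context. The allowed_groups payload field and the group names are hypothetical; the one deliberate design choice is default-deny, so a point without ACL metadata is never returned.

```python
def allowed(payload: dict, user_groups: set[str]) -> bool:
    """Default-deny: a point is visible only if it shares a group with the user."""
    return bool(set(payload.get("allowed_groups", [])) & user_groups)

def search_with_acl(results: list[dict], user_groups: set[str]) -> list[dict]:
    """Drop every result the calling user may not see."""
    return [r for r in results if allowed(r["payload"], user_groups)]

# Pretend these came back from the vector search.
results = [
    {"id": 1, "payload": {"text": "Salary bands 2024", "allowed_groups": ["hr"]}},
    {"id": 2, "payload": {"text": "Office wifi guide", "allowed_groups": ["hr", "everyone"]}},
    {"id": 3, "payload": {"text": "Untitled draft"}},  # no ACL metadata
]

visible = search_with_acl(results, {"everyone"})
print([r["id"] for r in visible])
```

In a real setup you would usually pass the same condition to the database as a query filter rather than filtering afterwards, so restricted documents don't eat into your top results.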

Metadata Filtering

By introducing the ability to filter based on the metadata, you can expose these filters to your LLM and it’ll utilize them as it sees fit.

Calling RAG from your LLM

You can do this in many ways, but I think creating an MCP for your RAG is the best solution for experimenting.

- MCP will let you set up tools such as document_search(query, top_k) and read_document(id)

- Because a lot of AI Agents, harnesses, and tools let you hook into an MCP, you can just make your RAG available to them (e.g. just connect it to Codex or Claude Code by configuring it in MCPs)

Once it’s accessible for tool calling, just set up your call definitions based on what you want your LLM to do.
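As a rough idea of what those call definitions can look like, here are the two tools described in a JSON-Schema-like shape, plus a tiny check for missing arguments. The exact field names depend on the framework or MCP SDK you use, so treat this structure, the descriptions, and the default value as assumptions rather than a spec.

```python
TOOL_DEFINITIONS = [
    {
        "name": "document_search",
        "description": "Search the user's indexed private documents and return matching chunks.",
        "parameters": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "What to look for, in plain language."},
                "top_k": {"type": "integer", "description": "How many chunks to return.", "default": 5},
            },
            "required": ["query"],
        },
    },
    {
        "name": "read_document",
        "description": "Return the full raw document that a chunk came from.",
        "parameters": {
            "type": "object",
            "properties": {
                "id": {"type": "string", "description": "Document id taken from a search result."},
            },
            "required": ["id"],
        },
    },
]

def missing_arguments(tool_name: str, arguments: dict) -> list[str]:
    """List the required parameters that a tool call left out."""
    for tool in TOOL_DEFINITIONS:
        if tool["name"] == tool_name:
            return [key for key in tool["parameters"]["required"] if key not in arguments]
    raise KeyError(f"unknown tool: {tool_name}")

print(missing_arguments("document_search", {"top_k": 3}))
print(missing_arguments("read_document", {"id": "abc"}))
```

The descriptions are what the LLM actually reads when deciding which tool to call, so they are worth writing carefully.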

References

Some Git Repos on my bookmarks

Remember you can just check out a repo, open something like Codex, and ask it to explain X, Y, Z to see how a solution approaches this problem.

- Klee: Local Desktop AI with Built-In RAG

- monkeSearch: Local Natural-Language File Search

- rust-local-rag: Rust MCP Server for Local RAG

- Qdrant: Vector Database and Search Engine

- file-brain: Smart Local File Search Engine

- MegaParse: LLM-Optimized Document Parser

- FACT: Tool-Based Retrieval Without Vector Search

- Haystack: Open-Source LLM Orchestration Framework

- txtai: Semantic Search and LLM Workflows

- kotaemon: Open-Source RAG Chat for Documents

- 2nd-Brain: Desktop Personal Knowledge Base with RAG